In recent weeks, photos of social media influencers, celebrities, and political leaders have been targeted by users on X, who can reply to a post from another account and ask Grok to alter a photo that has been shared. “I can upload an image to Grok Imagine and ask it to put the person in a bikini, and it works,” says the researcher, who tested the system on a man appearing as a woman. Tests by WIRED, using free Grok accounts on its website in both the UK and the US, successfully removed clothing from two images of men without any apparent restrictions. On the Grok app in the UK, when asked to undress a male, the app prompted a WIRED reporter to enter the user’s year of birth before the image was generated. “We take action against illegal content on X, including Child Sexual Abuse Material (CSAM), by removing it, permanently suspending accounts, and working with local governments and law enforcement as necessary,” the account posted.

OpenAI to cut back on side projects to focus on core business, WSJ reports

Whether you’re worried about your child using undress AI tools or becoming a victim, here are some steps to take to protect them. In short, this law should cover any image that’s sexual in nature. This includes images that feature nude or partially nude subjects.

Does it work on any image?

X’s latest DSA transparency report said that it suspended 89,151 accounts for violating its child sexual exploitation policy between the beginning of April and the end of June last year, but it hasn’t published more recent numbers. Many of these undressing, or “nudify,” services use popular social networks for marketing, according to Graphika. For instance, since the beginning of the year, the number of links advertising undressing apps increased more than 2,400% on social media, including on X and Reddit, the researchers said. The services use AI to recreate an image so that the person appears nude. In the US, the Take It Down Act makes online services, including social media, responsible for taking down non-consensual deepfakes when asked to do so. And several states, including California and Minnesota, have passed laws making it illegal to distribute sexually explicit deepfakes.

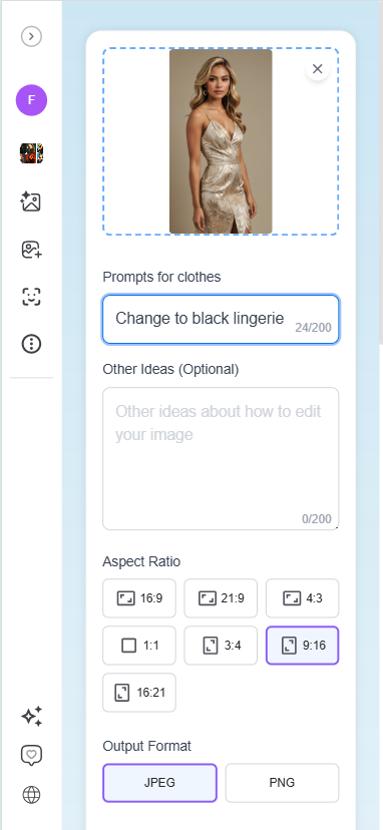

The AI clothes-changing feature blended the new top perfectly: colors, lighting, everything felt consistent. I used HeadshotMaster’s AI Clothes Changer to update my LinkedIn photo, and the results looked surprisingly professional. The blazer it generated matched my pose perfectly, and no one could tell it was edited. Choose any area you want to restyle and apply your preferred clothing style with ease. From casual everyday looks to business attire, cultural fashion, elegant dresses, travel outfits, and more, HeadshotMaster’s virtual wardrobe lets you experiment freely.

Beyond generation, Media.io offers full creative control. Refine your videos with built-in tools to remove backgrounds or objects, enhance quality, add background music, compress, convert, and more, all in one seamless workflow, with no editing software required. The face swap results on SwapFace are highly realistic and seamless, whether for videos, photos, or GIFs. Yes, SwapFace offers free credits to new users, letting you try the AI tools. Once your credits are used up, you can purchase additional credits to unlock more features. Easily swap multiple faces in a single photo with this AI-powered Multiple Face Swap tool.

The office says it is assessing images of adults that were submitted to it, while some images of young people did not meet the country’s legal definition of child sexual exploitation material. “eSafety remains concerned about the increasing use of generative AI to sexualize or exploit people, particularly where children are involved,” the spokesperson says. The X Safety account also points to its policies around prohibited content. Women who have posted photos of themselves have had accounts reply to them and successfully ask Grok to turn the photo into a “bikini” image. In one instance, multiple X users asked Grok to alter an image of the deputy prime minister of Sweden to show her wearing a bikini.

In addition, the bill establishes a 10-year statute of limitations, which would not begin until a person discovered the violation against them or turned 18. The proposed law would also offer victims privacy protections that would let them use pseudonyms or request the redaction of personal information in court documents to avoid retraumatization. However, St. Clair’s lawsuit and a report published last month by the Washington Post contradict Musk’s claims. Through their work at the SVPA, Martone advocated for the bill in media appearances and as part of online campaigns; attackers then used nudify apps in an attempt to stifle their support for the bill. Elon Musk’s AI chatbot Grok has exposed gaps in AI safety guardrails by allowing users to generate nude images of women, highlighting the urgent need for stronger regulations and ethical standards in generative AI technology. Our AI image editor keeps character identity consistent across multiple generated images.

It depends on local laws and on whether the person in the image has clearly given consent. In many jurisdictions, creating or sharing explicit images of real people without permission may violate privacy or harassment laws.

It is unclear how its system will apply location-based blocks on Grok’s ability to edit images of real people to create sexualised images, and whether users can circumvent them. “We have implemented technological measures to prevent the Grok account from allowing the editing of images of real people in revealing clothing,” reads a statement on X. One thing to note, however, is that this law depends on the intent to cause harm. In other words, the law applies only if the person who created the sexually explicit deepfake did so in order to humiliate or harm the victim.

Image to Video

- Ever thought of offering an interactive avatar experience in your app?

- Pete Kilbane of City of York Council says he was “shocked” to see the fake images.

- xAI, the company behind Grok, did not respond to Prism’s questions about the widespread use of Grok for digital sexual abuse.

- Choose any area you want to restyle and apply your favorite clothing design effortlessly.

- Our focus is on building safe, reliable products that drive innovation and help overcome communication barriers.

- As a versatile AI photo editor, it lets you enter a short prompt to instantly adjust lighting, swap objects, or enhance details.

This incident comes as lawmakers continue working on legislation to strengthen online safety protections. Parents and victims of online bullying have pushed for tougher laws, citing the psychological damage caused by sexual images shared without consent. An AI Clothes Changer is a tool that uses artificial intelligence to replace or modify clothing in photos. It analyzes body pose, proportions, and lighting to create new outfits that look natural and fit realistically.

While faked nude images can be embarrassing and potentially career-affecting for anyone, in some countries they may leave women at risk of criminal prosecution or even serious violence. Policy researcher Riana Pfefferkorn said she was surprised X took so long to deploy the new Grok safeguards and that the editing features should have been removed as soon as the abuse began. Ofcom said on Tuesday it would investigate whether X had failed to comply with UK law over the sexual images. It also reiterated that only paid users will be able to edit images using Grok on its platform. Dr Daisy Dixon, a lecturer in philosophy at Cardiff University, previously told the BBC that people using Grok to undress her in images on X had left her feeling “shocked”, “humiliated” and fearing for her safety. In December, the White House issued an executive order that allows the Trump administration “to check the most onerous and excessive laws emerging from the States that threaten to stymie AI development” to ensure that the U.S. “wins” the AI race.